Luminance (in)stability in OLED monitors

Topic

It is well known that OLED monitors reduce image luminance when the average image luminance would otherwise exceed a limit that the monitor cannot sustain in the long run. That behavior is not the subject here. Instead, this section examines luminance stability when the monitor is set to the "uniform brightness" mode and is, moreover, operated well below the maximum luminance specified for that mode. One major difference between LCD and OLED technology is that in LCD monitors the light comes from a constant backlight and is merely modulated by the pixel cells, whereas in OLED monitors the light is generated directly by the pixels, on demand, so to speak. Assuming that most of the monitor's energy consumption goes into generating light, the energy demand of an LCD is fairly constant and much easier to control than that of an OLED monitor, where energy demand is tightly coupled to the image content and can change rapidly from one frame to the next. Moreover, the control of the overall OLED current has to be not only fast but also very accurate, because errors do not average out across the OLED pixels: a 1% error in the overall current directly results in a 1% luminance error in every single pixel. Whether potentially unstable luminance matters in practice depends entirely on the application, e.g., on how fast and by how much the (intended) average image luminance changes, and on how stable the luminance of a target stimulus has to be.

Luminance measurement method

A monochrome industrial camera (IDS UI-3360CP-NIR, 2048×1088 pixels, 2/3" monochrome CMOS sensor) was used for luminance recording. It could comfortably capture an ROI of 1024×1024 camera pixels with 10-bit gray-scale resolution at ~65 Hz. A 25 mm lens was used with a 20 mm C-mount spacer and a short rubber sleeve (diameter < 35 mm), which allowed the camera to be brought so close to the screen that a 5×5 mm target patch almost filled the ROI when slightly out of focus. The rubber sleeve touched the screen and reliably shielded the camera from ambient light.

The target was defocused to blur the subpixel structure and thereby even out the camera pixel values. This allowed a larger lens aperture (f≈4) without saturating camera pixels. The camera was operated in free-run mode with the maximum exposure duration and a gain factor of 1. The camera frame rate was set to 63.85 Hz, which intentionally differs from 60 Hz to avoid phase-locking either to the monitor refresh rate, which was set to 120 Hz (i.e., k·60 Hz), or to the final sampling frequency of 4 Hz used for further analysis of the luminance signal.

Stimulus presentation and final luminance sampling followed a 4 Hz clock. In essence, each 4 Hz sampling cycle started with a synchronous update of the screen image buffer, whether or not the image content actually changed. Camera images that fell within this sampling cycle were averaged. The details were, of course, more complicated, because camera data arrived with a delay and some camera images had to be discarded: those recorded before the screen content had settled, and those that might already have been contaminated by the subsequent screen update. These exclusion criteria were applied regardless of whether the screen content actually changed, which made the 4 Hz samples more comparable in terms of how many camera images went into each final luminance sample. The settling allowance after a screen update, which also covered processing-time uncertainties, depended on the monitor technology (e.g., a generous 10 ms for OLED monitors and 40 ms for the (fast) TN and IPS monitors).
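The frame-selection logic is not spelled out in detail, so the following is only a minimal Python sketch under stated assumptions: camera frame start times are already corrected for the transfer delay, the exposure lasts about one frame period (a hypothetical value), and a frame is kept only if it starts at least 10 ms after a screen update and ends before the next one. The function name `frames_per_cycle` is hypothetical.

```python
import numpy as np

CAMERA_HZ = 63.85   # camera frame rate (from the text)
CYCLE_HZ = 4.0      # stimulus and sampling clock (from the text)
SETTLE_S = 0.010    # settling allowance after a screen update (OLED value from the text)


def frames_per_cycle(frame_starts, exposure_s, n_cycles,
                     cycle_hz=CYCLE_HZ, settle_s=SETTLE_S):
    """For each 4 Hz cycle, return the indices of camera frames that may be averaged.

    A frame is kept only if its exposure starts at least `settle_s` after the
    cycle's screen update and ends before the next update. Frame timestamps are
    assumed to be already corrected for the camera's data delay.
    """
    period = 1.0 / cycle_hz
    selected = []
    for k in range(n_cycles):
        t_update = k * period          # screen buffer update that starts cycle k
        t_next = t_update + period     # next screen buffer update
        ok = (frame_starts >= t_update + settle_s) & (frame_starts + exposure_s <= t_next)
        selected.append(np.flatnonzero(ok))
    return selected


# Free-running camera with an arbitrary phase offset relative to the 4 Hz clock.
exposure = 1.0 / CAMERA_HZ          # hypothetical: exposure of about one frame period
starts = 0.0031 + np.arange(2000) / CAMERA_HZ
cycles = frames_per_cycle(starts, exposure, n_cycles=100)
counts = [len(c) for c in cycles]
print(min(counts), max(counts))
```

Because 0.25 s is not a whole number of camera frame periods (0.25 × 63.85 = 15.96), the frame grid slides relative to the 4 Hz clock, and under these assumptions each cycle receives either 14 or 15 usable frames. Applying the same rule to every cycle, whether or not the screen changed, keeps that count consistent.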

To maximize the signal-to-noise ratio (SNR), the camera image pixels were weighted according to their potential signal contribution. The weight mask was derived from a slightly blurred reference image recorded for a white target on a black background at the beginning of each test. Such pixel weighting, however, is only beneficial if the image position remains very stable over the entire measurement. The image position was therefore continuously checked for shifts. This also served to detect the pixel shift that some OLED monitors apply as part of their OLED care feature, which would invalidate measurements with small targets. The target was always surrounded by a black area 35 mm in diameter, which, together with the lens rubber sleeve, ensured that the camera image could not be affected by stray light from the room lighting or from the potentially changing background patterns.
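A minimal sketch of both ideas, assuming weights proportional to the blurred reference image (the matched-filter choice, which is optimal for additive noise of equal variance; the exact weighting rule is not stated in the text) and integer-pixel shift detection via FFT cross-correlation. All function names, the box blur, and the synthetic Gaussian target are illustrative assumptions; a real setup would probably also need sub-pixel shift estimation.

```python
import numpy as np


def box_blur(img, radius=2):
    """Separable box blur, a stand-in for the 'slight blur' mentioned in the text."""
    kernel = np.ones(2 * radius + 1) / (2 * radius + 1)
    rows = np.apply_along_axis(np.convolve, 1, img, kernel, mode="same")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="same")


def make_weights(reference, radius=2):
    """Pixel weights proportional to the blurred reference signal, normalised to sum 1."""
    w = np.clip(box_blur(np.asarray(reference, dtype=float), radius), 0.0, None)
    return w / w.sum()


def relative_target_luminance(frame, reference, weights):
    """Weighted pixel average of `frame`, relative to the same average of `reference`."""
    return float(np.sum(weights * frame) / np.sum(weights * reference))


def estimate_shift(reference, frame):
    """Integer (dy, dx) by which `frame` is shifted relative to `reference`.

    Uses circular cross-correlation computed with FFTs; the peak location is the shift.
    """
    ref = reference - reference.mean()
    img = frame - frame.mean()
    xcorr = np.fft.ifft2(np.fft.fft2(img) * np.conj(np.fft.fft2(ref))).real
    dy, dx = np.unravel_index(np.argmax(xcorr), xcorr.shape)
    ny, nx = xcorr.shape
    # Indices beyond half the array size correspond to negative shifts (wrap-around).
    dy = dy - ny if dy > ny // 2 else dy
    dx = dx - nx if dx > nx // 2 else dx
    return int(dy), int(dx)


# Synthetic, defocused-looking target: a Gaussian spot on a black background.
yy, xx = np.mgrid[0:128, 0:128]
reference = 800.0 * np.exp(-((yy - 64) ** 2 + (xx - 64) ** 2) / (2 * 10.0 ** 2))
weights = make_weights(reference)

rng = np.random.default_rng(1)
frame = 0.97 * reference + rng.normal(0.0, 5.0, reference.shape)
print(relative_target_luminance(frame, reference, weights))   # close to 0.97

shifted = np.roll(reference, (3, -2), axis=(0, 1))
print(estimate_shift(reference, shifted))
```

A nonzero shift estimate would flag frames whose weight mask no longer matches the target position.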

Test stimuli

There were several tests, each with its own stimulus configuration, comprising a measured target at the screen center and a background covering the rest of the screen. The measured target could be either small or big, and its luminance could be constant or change over time. The background either switched between different static patterns (see Figure 1), stayed all black or all mid-gray throughout, or was slowly ramped from black to white and then back from white to black.

Test protocol

The entire test sequence ran automatically, but the individual tests were otherwise independent, except for the camera adjustment, which was done only once before the first test. Before each test, a quick gamma curve was measured, mainly to infer the pixel values needed for mid-gray (i.e., 50% of the maximum luminance). In addition, a reference image was captured, which served several purposes: documentation, detection of image position shifts, and defining the camera pixel weights used for SNR optimization when averaging over camera pixels (as described above).
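How the quick gamma curve was inverted is not described; one simple possibility, sketched below under that assumption, is linear interpolation between the measured gray levels. The function name, the gray levels, and the ideal gamma-2.2 "measurement" are hypothetical.

```python
import numpy as np


def pixel_for_relative_luminance(pixel_values, luminances, target=0.5):
    """Pixel value whose luminance is `target` times the maximum measured luminance.

    Inverts a measured, monotonically increasing gamma curve by linear
    interpolation; the result is fractional and must be rounded before use.
    """
    pv = np.asarray(pixel_values, dtype=float)
    rel = np.asarray(luminances, dtype=float) / np.max(luminances)
    return float(np.interp(target, rel, pv))


# Hypothetical quick gamma curve: 18 gray levels, ideal gamma 2.2, 250 cd/m^2 white.
levels = np.linspace(0, 255, 18)
measured = 250.0 * (levels / 255.0) ** 2.2
mid_gray = pixel_for_relative_luminance(levels, measured)
print(round(mid_gray))
```

With a real monitor, the measured curve replaces the ideal power law, so the mid-gray pixel value can differ between monitors even though the intended luminance ratio is the same.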

For each test, 5 repetitions of 2- to 4-minute sweeps were measured. The stimulus sequence within a sweep, even if randomized, was exactly the same for all sweep repetitions (and for all monitors). For example, if the background changed randomly from time to time, the random sequence of background patterns and the duration of each pattern were the same for all sweeps and all monitors. Before each sweep, a black screen was presented for 10 seconds, intended to partially reset the state of the screen pixels and of the monitor's control electronics. Data collection took about 37 minutes per monitor.
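One way to make a randomized sequence reproducible across sweeps and monitors is to draw it from a random number generator with a fixed seed. The sketch below assumes hypothetical pattern names and pattern durations between 2 and 10 s, quantized to whole 4 Hz cycles; the actual patterns and timing rules are not given in the text.

```python
import random


def background_schedule(seed, sweep_s=120.0, cycle_hz=4.0,
                        patterns=("pattern_1", "pattern_2", "pattern_3"),
                        min_s=2.0, max_s=10.0):
    """Background pattern name for every 4 Hz cycle of one sweep.

    A private RNG with a fixed seed makes the 'random' sequence identical for
    every sweep repetition and every monitor.
    """
    rng = random.Random(seed)
    n_cycles = int(round(sweep_s * cycle_hz))
    schedule = []
    while len(schedule) < n_cycles:
        pattern = rng.choice(patterns)
        # Pattern durations are whole numbers of 4 Hz cycles.
        n = rng.randint(int(min_s * cycle_hz), int(max_s * cycle_hz))
        schedule.extend([pattern] * n)
    return schedule[:n_cycles]


# Five repetitions of a 2-minute sweep all use the same sequence.
sweeps = [background_schedule(seed=7) for _ in range(5)]
print(len(sweeps[0]), all(s == sweeps[0] for s in sweeps))
```

Using a dedicated `random.Random` instance instead of the module-level generator keeps the sequence independent of any other random draws made elsewhere in the test program.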

Analysis

Very slow luminance changes are not of interest here, so luminance drift was removed from each sweep. The drift was modeled by a smoothing quadratic spline with support points about every 60 s. For 2-minute sweeps, for example, this resulted in 4 coefficients per sweep, including the coefficients for the boundary conditions at the start and end of the sweep. Because such a drift model is too flexible to preserve the systematic luminance changes that are of interest in the BgRamp+TgtSmallWhite test (see below), it was replaced by a simple linear drift model for that specific test.
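The following sketch illustrates the idea with a least-squares quadratic regression spline rather than a penalized smoothing spline (the text does not say which was used), written in a truncated-power basis so that only NumPy is needed; for a 2-minute sweep this basis has the 4 coefficients mentioned above. Dividing out the drift, rather than subtracting it, is also an assumption, chosen so that subsequent relative errors do not depend on the drift level. Function names are hypothetical.

```python
import numpy as np


def quadratic_spline_basis(t, knot_spacing=60.0):
    """Truncated-power basis of a quadratic spline with knots about every `knot_spacing` s.

    It spans the same function space as quadratic B-splines on the same knots;
    for a 2-minute sweep (one interior knot) it has 4 columns.
    """
    t = np.asarray(t, dtype=float)
    u = (t - t.min()) / knot_spacing                   # time in knot-spacing units
    n_intervals = max(1, int(round((t.max() - t.min()) / knot_spacing)))
    columns = [np.ones_like(u), u, u ** 2]
    for j in range(1, n_intervals):                    # one column per interior knot
        columns.append(np.clip(u - j, 0.0, None) ** 2)
    return np.column_stack(columns)


def remove_drift(t, lum, model="spline", knot_spacing=60.0):
    """Least-squares drift fit, divided out and rescaled to the sweep's mean drift level."""
    t = np.asarray(t, dtype=float)
    lum = np.asarray(lum, dtype=float)
    if model == "linear":                              # e.g. for the BgRamp+TgtSmallWhite test
        X = np.column_stack([np.ones_like(t), t - t.min()])
    else:
        X = quadratic_spline_basis(t, knot_spacing)
    coef, *_ = np.linalg.lstsq(X, lum, rcond=None)
    drift = X @ coef
    return lum / drift * drift.mean()


t = np.arange(480) / 4.0                               # 2-minute sweep sampled at 4 Hz
slow = 100.0 + 0.05 * t - 0.0004 * t ** 2              # hypothetical slow drift
corrected = remove_drift(t, slow)
print(quadratic_spline_basis(t).shape, np.ptp(corrected))
```

Fast luminance changes, on the time scale of the 4 Hz samples, cannot be represented by a spline with knots 60 s apart and therefore survive the correction.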

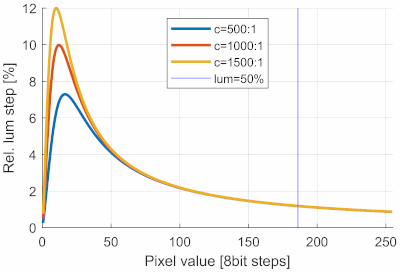

The blue vertical line indicates where the luminance reaches 50% of the maximum luminance. All tests use target luminances higher than 50%, where the relative luminance step sizes are about 1% and are essentially independent of the monitor's white contrast.

Absolute luminance levels are not of interest either, so the luminance error is expressed as a relative luminance error, i.e., the difference between the measured and the expected luminance, divided by the expected luminance. The expected luminance was defined as the average luminance across all measurements within a sweep for the respective target luminance. This definition closely reflects what is perceptually relevant: a 1% relative luminance error is perceptually as large in dark stimuli as in bright stimuli, even though the corresponding absolute luminance errors differ.
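A direct transcription of this definition, assuming each luminance sample is tagged with its intended target level (the function name and the sample values are hypothetical):

```python
import numpy as np


def relative_luminance_error(lum, target_level):
    """(measured - expected) / expected, where 'expected' is the mean luminance of
    all samples in the sweep that share the same target level."""
    lum = np.asarray(lum, dtype=float)
    target_level = np.asarray(target_level)
    err = np.empty_like(lum)
    for level in np.unique(target_level):
        sel = target_level == level
        expected = lum[sel].mean()
        err[sel] = (lum[sel] - expected) / expected
    return err


# A bright and a dark target level, each with the same +/-2 % deviations.
lum = [100.0, 102.0, 98.0, 50.0, 51.0, 49.0]
levels = [255, 255, 255, 186, 186, 186]
print(relative_luminance_error(lum, levels))
```

The bright and dark samples yield identical relative errors although their absolute deviations (2 vs. 1 luminance unit) differ, which is the property the definition is meant to capture.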

For a quantitative assessment of the relative luminance error values, a comparison with the relative luminance step size of a gamma transfer function can be useful. Unfortunately, the step sizes depend on the assumed gamma value, the white contrast, and the pixel value. Figure 1 shows curves for gamma = 2.2 and three different contrast levels. For the tests described here, which mostly use a 100% white target or at least a target with a luminance above 50% of maximum white, one 8-bit step corresponds to a relative step size of roughly 1%. A luminance error uniformly distributed over one such step would have an SD of 1%/sqrt(12) ≈ 0.3%. This means that, at least for higher pixel values, 8-bit quantization alone causes a relative luminance error with an SD of about 0.3%. However, this is a theoretical value that does not include the round-off noise introduced by the color processing in the monitor.
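The step-size argument can be checked numerically under a simple display model, which is an assumption here: a pure power law with gamma 2.2 plus a black-level offset set by the contrast ratio, with white normalized to 1. The function name `relative_step` is hypothetical.

```python
import math


def relative_step(v, gamma=2.2, contrast=1000.0, bits=8):
    """Relative luminance increase from pixel value v to v + 1.

    Assumed display model: L(v) = L_black + (1 - L_black) * (v / v_max) ** gamma,
    with white luminance normalised to 1 and L_black = 1 / contrast.
    """
    v_max = 2 ** bits - 1
    l_black = 1.0 / contrast

    def lum(x):
        return l_black + (1.0 - l_black) * (x / v_max) ** gamma

    return (lum(v + 1) - lum(v)) / lum(v)


for v in (186, 220, 254):          # pixel values at and above ~50 % luminance
    print(v, f"{100 * relative_step(v):.2f} %")

# SD of an error uniformly distributed over a step of width 1 %: 1 % / sqrt(12)
print(f"{1.0 / math.sqrt(12):.2f} %")
```

In this model the step shrinks from about 1.2% at mid-gray to about 0.9% near white, and at these pixel values it changes only marginally between contrast ratios of 100:1 and 10000:1.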